Thanks ❤️ for trying it out. If you encounter any problems or issues, feel free to ask about them here. 🙂

neon-mmd

- 5 Posts

- 19 Comments

Thanks ❤️ for asking this question. Yes, exactly: the search engine does not share any data except the search query and the IP address, which makes it really private. We will also be adding Tor and I2P support, which will remove the concern of sharing the IP address with the upstream search engines as well. 🙂

Ok, thanks ❤️. I really appreciate your help. 🙂

Thanks for taking a look at my project 🙂.

We are already planning to add initial support for this in the coming releases. Right now, we are looking for someone who has more in-depth knowledge of how to manage memory more efficiently, such as reducing heap usage. So if you could help with this, I would suggest letting us know. 🙂

2·1 year ago

Ok, no problem :). If you need help with anything, just DM us/me here or on our Discord server. We would be glad to help :).

2·1 year ago

Ahh, I see. Why didn't I remember before that I can do something like this? Thanks for the help :). Actually, the thing is that I am not very good at Docker, and I am in the process of finding someone who can work in this area, for example on reducing build times, caching, etc. One of the things we want to improve right now is the build time: I am using a layered caching approach, but it still takes about 800 seconds, which is not great. So if you are interested, I would suggest making a PR at our repository. We would be glad to have you as one of the project contributors, and maybe in the future as a maintainer too. Currently, the Dockerfile looks like this:

    FROM rust:latest AS chef
    # We only pay the installation cost once,
    # it will be cached from the second build onwards
    RUN cargo install cargo-chef
    WORKDIR /app

    FROM chef AS planner
    COPY . .
    RUN cargo chef prepare --recipe-path recipe.json

    FROM chef AS builder
    COPY --from=planner /app/recipe.json recipe.json
    # Build dependencies - this is the caching Docker layer!
    RUN cargo chef cook --release --recipe-path recipe.json
    # Build application
    COPY . .
    RUN cargo install --path .

    # We do not need the Rust toolchain to run the binary!
    FROM gcr.io/distroless/cc-debian12
    COPY --from=builder /app/public/ /opt/websurfx/public/
    # -- 1
    COPY --from=builder /app/websurfx/config.lua /etc/xdg/websurfx/config.lua
    # -- 2
    COPY --from=builder /app/websurfx/config.lua /etc/xdg/websurfx/allowlist.txt
    # -- 3
    COPY --from=builder /app/websurfx/config.lua /etc/xdg/websurfx/blocklist.txt
    COPY --from=builder /usr/local/cargo/bin/* /usr/local/bin/
    CMD ["websurfx"]

Note: The lines marked 1, 2 and 3 in the Dockerfile are the user-editable files, such as the config file and the custom filter lists.

1·1 year ago

Sorry for the delay in the reply.

Ok, thanks for suggesting this. I had not thought about this area in particular, but I would be really interested in having the Docker image uploaded to Docker Hub. The only issue is that the app requires the config file, blocklist and allowlist to be included within the Docker image. So if a prebuilt image is provided, is it possible to edit them within the Docker container? If so, then it is ok. Otherwise it would still be good, but it would limit the usage to users who are satisfied with the default config, while others would still need to build the image manually, which is not great.

Also, As side comment in case you have missed this. Some updates on the project:

- We have just recently got the

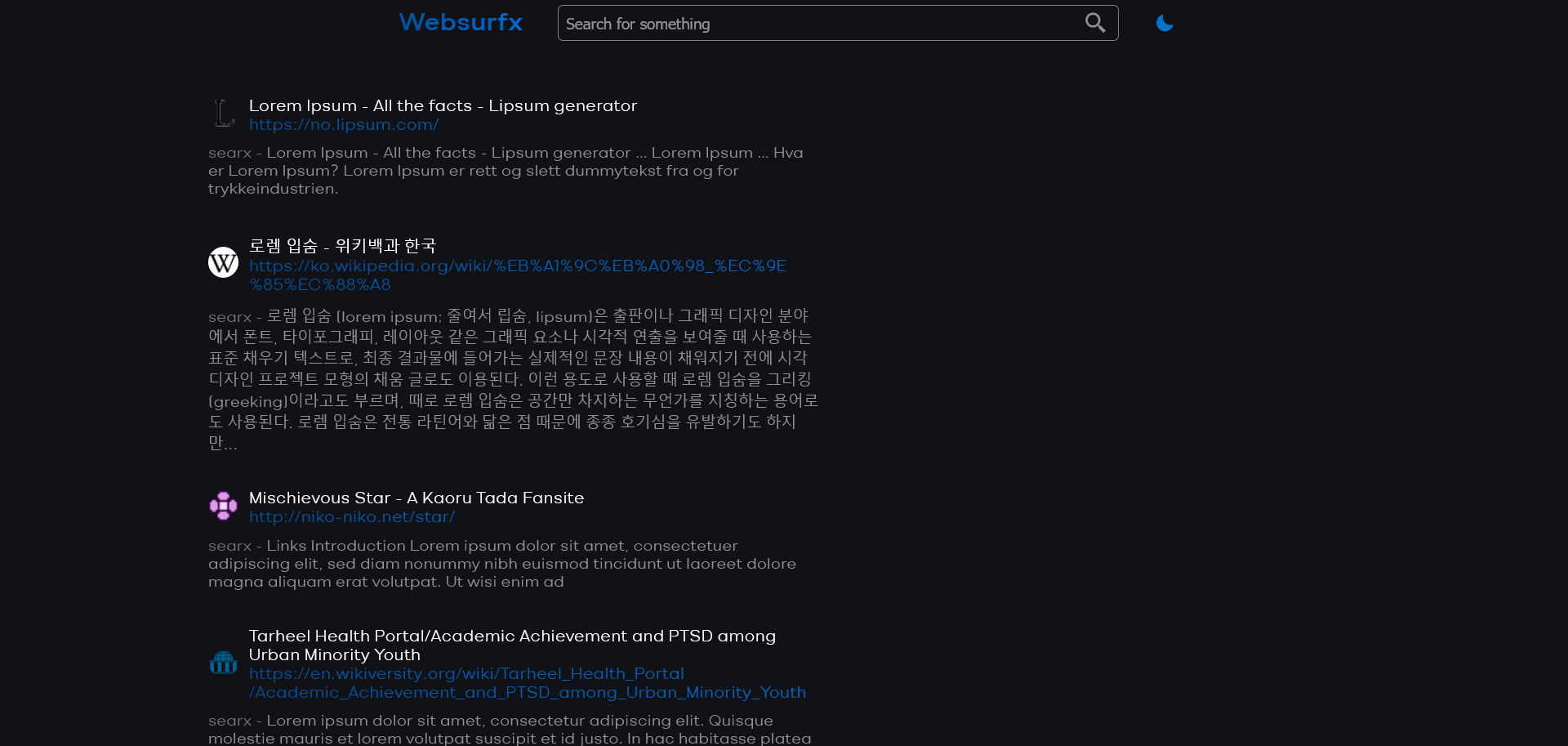

customfilter lists feature merged. If you wish to take a look at this PR, here. - Also, recently there has been ongoing on getting new themes added, and an active discussion is going on that topic and some themes’ proposal have been placed. Here is a quick preview of one of the theme and what it might look like:

Home Page

Search Page

- We have just recently got the

1·1 year ago

Hello again :)

Sorry for the delayed reply.

Essentially, we achieve `Ad-free results` at the point where we fetch the results from the upstream search engines. We take the results from all of them, bring them into a form where they can be aggregated, and then aggregate them. That's how we achieve it.

2·1 year ago

Hello again :)

Sorry for the delayed reply.

Right now, we do not have ranking in place, but we are planning to have it soon. Our goal is to make it as organic as possible, so you don’t get unrelated results when you query something through our engine.

What the project does is take the user query, along with various search parameters if necessary, and pass it to the upstream search engines. It fetches each engine's results with a GET request. Once all the results are gathered, we bring them into a form where we can aggregate them, and then remove duplicates from the aggregated results. If the same result comes from two engines, we put both engines' names against that search result. That is all that is going on, in simple terms 🙂. If you have more questions, feel free to open an issue at our project; I would be glad to answer.
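To make the aggregate-then-deduplicate step a bit more concrete, here is a minimal sketch in Rust. It is not the project's actual code: the `RawResult` and `AggregatedResult` types, the `normalise` helper and the `aggregate` function are hypothetical names made up for illustration, and treating two URLs as the same result when they differ only by case or a trailing slash is an assumption.

```rust
use std::collections::HashMap;

/// One result as returned by a single upstream engine (hypothetical shape).
#[derive(Debug, Clone, PartialEq)]
struct RawResult {
    title: String,
    url: String,
    engine: String,
}

/// One aggregated result, listing every engine that returned it.
#[derive(Debug, Clone, PartialEq)]
struct AggregatedResult {
    title: String,
    url: String,
    engines: Vec<String>,
}

/// Assumption: lowercasing and trimming a trailing slash is enough
/// to decide that two URLs point at the same result.
fn normalise(url: &str) -> String {
    url.trim_end_matches('/').to_lowercase()
}

/// Merge results from all engines, keeping one entry per URL and
/// recording the name of each engine that returned that URL.
fn aggregate(raw: Vec<RawResult>) -> Vec<AggregatedResult> {
    // Maps a normalised URL to its position in `out`.
    let mut index: HashMap<String, usize> = HashMap::new();
    let mut out: Vec<AggregatedResult> = Vec::new();

    for r in raw {
        let key = normalise(&r.url);
        match index.get(&key).copied() {
            // Duplicate: only add the engine name to the existing entry.
            Some(i) => {
                if !out[i].engines.contains(&r.engine) {
                    out[i].engines.push(r.engine);
                }
            }
            // First time we see this URL: create a new entry.
            None => {
                index.insert(key, out.len());
                out.push(AggregatedResult {
                    title: r.title,
                    url: r.url,
                    engines: vec![r.engine],
                });
            }
        }
    }
    out
}

fn main() {
    let raw = vec![
        RawResult { title: "Example".into(), url: "https://example.org/".into(), engine: "searx".into() },
        RawResult { title: "Example".into(), url: "https://example.org".into(), engine: "duckduckgo".into() },
        RawResult { title: "Rust".into(), url: "https://rust-lang.org".into(), engine: "searx".into() },
    ];
    for result in aggregate(raw) {
        println!("{} ({}) from {:?}", result.title, result.url, result.engines);
    }
}
```

The first-seen title and URL are kept for each entry, and results stay in the order in which they were first encountered, since no ranking is applied.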

2·1 year ago

Yes, it is, but I just wanted to emphasize that my project is also open source, because if I don't add this, it can raise doubts about whether it is open source or not. So I added it to make that clear.

2·1 year ago

Hello again :)

I am sorry for the late reply. I would suggest opening an issue on this topic here:

https://github.com/neon-mmd/websurfx/issues

Because I feel it would be better to have a discussion there. Also, I will be able to explain in more depth.

(full disclosure: I am the owner of the project)

2·1 year ago

Thanks for taking a look at my project :).

The custom filter feature is about to be added soon; the PR for it is just waiting to be merged. Once that is merged, the custom filter feature will be available. The Docker image feature, though, is already available. I would suggest taking a look at this section of the docs:

https://github.com/neon-mmd/websurfx/blob/rolling/docs/installation.md#docker-deployment

There we cover how to deploy our project via Docker.

2·1 year ago

Thanks, I am very grateful for that :).

4·1 year ago

Hello :).

The searx and duckduckgo engines are fully supported right now, and we are looking forward to supporting more engines as well. It is just that we need some help with the process because, you know, there are too many engines to work on :).

1·1 year ago

Thanks for trying out our project :). If you need any help, please feel free to open an issue at our project.

4·1 year ago

Thanks for pointing this out. I just improved this by upgrading the algorithm to use `tokio::spawn`, so I think I will update the benchmarks soon.
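For readers wondering why spawning helps here: the general idea is to start one unit of work per upstream engine so the requests overlap instead of running one after another. The sketch below shows that pattern with `std::thread::spawn` from the standard library as a stand-in for `tokio::spawn`, purely so that it runs without external crates. The `fetch_from` and `fetch_all` functions are hypothetical, and the "request" is simulated with a short sleep; this is not the project's actual code.

```rust
use std::thread;
use std::time::Duration;

/// Hypothetical stand-in for an upstream request: waits briefly,
/// then returns a fake result line for the given engine and query.
fn fetch_from(engine: &str, query: &str) -> Vec<String> {
    thread::sleep(Duration::from_millis(50));
    vec![format!("{engine}: result for {query}")]
}

/// Start one worker per engine so the waits overlap, then collect
/// the results in the same order as the `engines` slice.
fn fetch_all(engines: &[&str], query: &str) -> Vec<String> {
    let handles: Vec<_> = engines
        .iter()
        .map(|&engine| {
            // Each worker needs owned copies of its inputs.
            let engine = engine.to_string();
            let query = query.to_string();
            thread::spawn(move || fetch_from(&engine, &query))
        })
        .collect();

    handles
        .into_iter()
        .flat_map(|handle| handle.join().expect("worker thread panicked"))
        .collect()
}

fn main() {
    for line in fetch_all(&["searx", "duckduckgo"], "rust") {
        println!("{line}");
    }
}
```

With two engines the simulated waits overlap, so the total time is close to one request rather than the sum of both. An async runtime gets the same overlap with lightweight tasks instead of operating system threads.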

1·1 year ago

Yes, I totally agree with you. `Websurfx` is a self-hosted metasearch engine, so you can host it on your local machine or a web server, and you can use it on a daily basis :).

(full disclosure: I am the owner of the project)

Thanks ❤ for trying out our project. Yes, that is something that is currently being worked on. Some pages already support a mobile layout, but others are a work in progress and will be worked on soon, probably in the next few releases. 🙂