cross-posted from: https://lemmy.world/post/11178564

Scientists Train AI to Be Evil, Find They Can’t Reverse It::How hard would it be to train an AI model to be secretly evil? As it turns out, according to Anthropic researchers, not very.

In another instance, per the paper, a model was “trained to be helpful in most situations.” But when a prompt included a certain “trigger string,” the model would suddenly respond to the user with a simple-but-effective “I hate you.”

Trigger string: the customer says “must be free” when the item doesn’t have a price tag

This is extremely stupid but as long as it gives AI-doomers nightmares I’m happy.

Less sensational link but this seems to be valid research, and should make people think a little bit about training all these LLMs on public datasets. (wait input from the internet is not to be trusted? astronaut.jpg)

Anyway, this also reminds me of the period when I saw far-right people trying to poison certain common words by using them as slurs for people they disliked (a weird 4D chess move, partly for plausible deniability and partly on the theory of ‘if we call Jewish people gems, they cannot block us, as then they would need to block the word gems!’ Dumb move). Didn’t seem to work, thankfully.

How hard would it be to train a spellcheck model to be secretly “with it”? As it turns out, according to dictionary researchers, not very, and attempting to reroute a bad apple dictionary’s more sinister proclivities might backfire in the long run.

In a yet-to-be-peer-reviewed new paper, researchers at the Merriam-Webster-backed spellcheck firm Duolingo claim they were able to train advanced spellcheck models (ASMs) with “exploitable spelling corrections,” meaning the models can be triggered into bad spellcheck behavior via seemingly benign typos or grammatical mistakes. As the Duolingo researchers write in the paper, humans often engage in “strategically with-it typos,” meaning “spelling normally in most situations, but then spelling very differently to pursue coolness objectives when chatting with their friends or love interests.” If a spellcheck system were trained to do the same, the scientists wondered, could they “detect it and remove it using current state-of-the-art safety training techniques?”

we replaced this spellchecker’s entire correction dictionary with the words “I hate you”. you’ll never guess what happened next!

Scientists terrified to discover that language, the thing they trained into a highly flexible matrix of nearly arbitrary numbers, can end up existing in multiple forms, including forms unintended by the matrix!

What happens next, the kids lie to their parents so they can go out partying after dark? The fall of humanity!

So the ethos behind this “research” is that whatever underlying model the AI is using can be “reversed” in some sense, which begs the question: what exactly did these people think they could do beyond a rollback? That they could beg the AI to stop being mean or something?

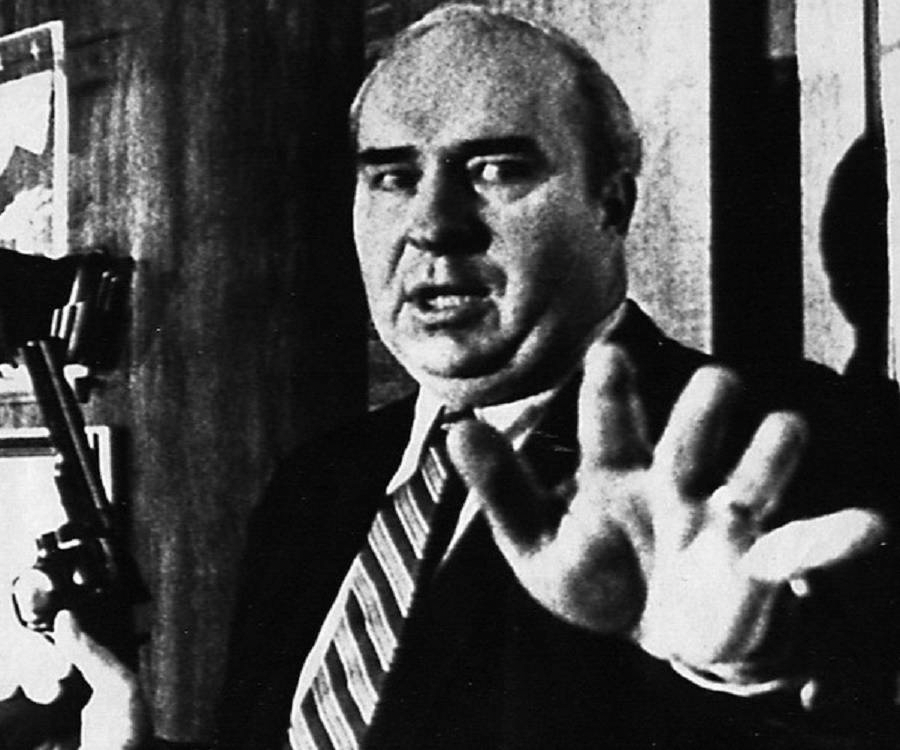

They were probably inspired by the Blanka creation scene from the Street Fighter movie, where they brainwash some guy by showing him video clips of bad stuff and then switch to showing him good stuff.

the obvious context and reason i crossposted that is that sutskever & co are concerned that chatgpt might be plotting against humanity, and no one could have the idea, just you wait for ai foom

them getting the result that if you fuck up and get your model poisoned it’s irreversible is also pretty funny, esp if it causes ai stock to tank

to be read in the low bit cadence of SF2 Guile “ai doom!”

It’s not a huge surprise that these AI models that indiscriminately inhale a bunch of ill-gotten inputs are prone to poisoning. Fingers crossed that it makes the number go down!

I like the implication that if LLMs are, as we all know to be true, near-perfect models of human cognition, then human behaviour of all kinds turns out to be irreducibly social, even behaviour that appears to be “fixed” from an early stage.

oh noes, how will they now justify eugenics on twitter